How to increase SQL Database Full Backup speed using compression and Solid State Disks

The SQL 2008 Database backup compression feature (which was introduced as a SQL2008 Enterprise Edition only feature) will become available in the SQL2008 R2 Standard Edition also ( SQL 2008 R2 Editions). I’m using the compression feature for quite some time now and really love it; it saves significant amounts of disk space and increases the backup throughput. And when such a great feature is available I would recommend you to use it! In the following walk through I will show you some simple tricks to speed up the backup throughput.

How fast can we run a Full Backup on a single database with a couple of billion rows of data, occupying a couple hundred Gigabytes of disk space, spread across multiple Filegroups?

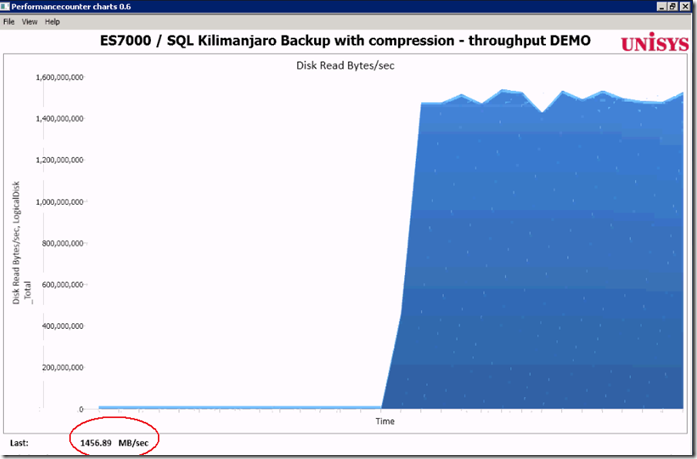

Of course your server will use some extra CPU cycles to compress the data on the fly and will use the maximum available IO bandwidth. For that purpose I tested on some serious hardware with plenty of both: a 96 core Unisys ES7000 model 7600R with 2 DSI Solid State Disk units who deliver a total of 6+ GB/sec IO throughput.

Getting started with Database Backup Compression

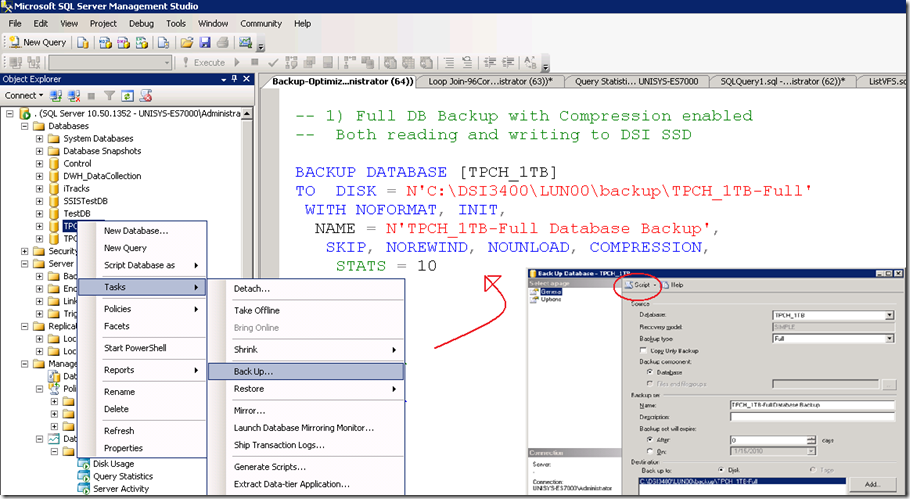

Database backup compression is disabled by default and it can be enabled through the SSMS GUI or by adding the word “COMPRESSION” to a T-SQL backup query.

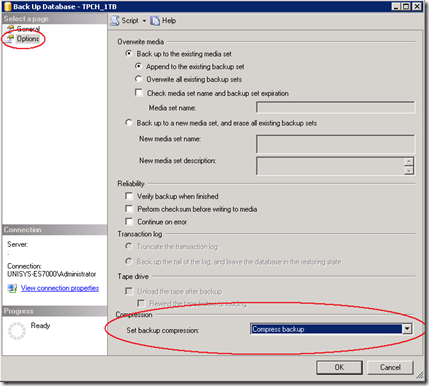

Through SSMS, when you -right click- the database which you would like to backup, under –Tasks- , -Back Up…-, -Options-, at the bottom , you will find the Compression feature. (see pictures)

After you have selected the “Compress Backup” option, click the –Script- Option to generate the TSQL statement. Please note that the word COMPRESSION is all you need to enable the feature from your backup query statement.

To enable backup compression feature for all your databases as the default option, change the default with the following sp_configure command:

GO

EXEC sp_configure backup compression default, '1';

RECONFIGURE WITH OVERRIDE

Step1 : Measure the “Out of the box” throughput

By running the above query, as a result, more than 1400 MByte/sec is read on average from both the DSI Solid State disks. That’s like reading all data from 2 full cd-rom’s each second. Not bad!

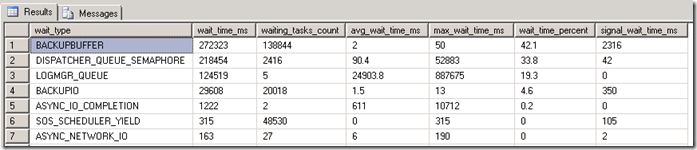

Waitstats

The SQL Waitstats show that the number 1 wait_type is BACKUPBUFFER.

The MSDN explanation for the BACKUPBUFFER wait_type is interesting: “the backup task is waiting for data or is waiting for a buffer in which to store data. This type is not typical, except when a task is waiting for a tape mount”. ehhh ok… since we are not using tapes , it means that this is not typical ! Let’s see what we can find out about the buffers.

Traceflag

To get more insights in the backup settings there are 2 interesting traceflags. With these traceflags enabled the actual backup parameters are logged into the SQL “Errorlog “.

DBCC TRACEON (3213, –1)

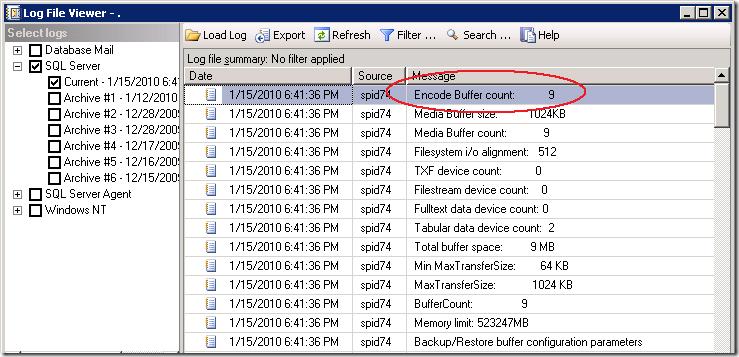

The Errorlog show that our backup was using 9 Buffers and allocated 9 Megabyte of buffer space.

9 MByte seems a relative low value to me to queue up all the destination backup file data and smells like an area where we can get some improvement, (especially since we have we have 16 x 4Gbit fiber cables and 6+ GigaByte/sec of IO bandwidth waiting to get saturated ;-) ).

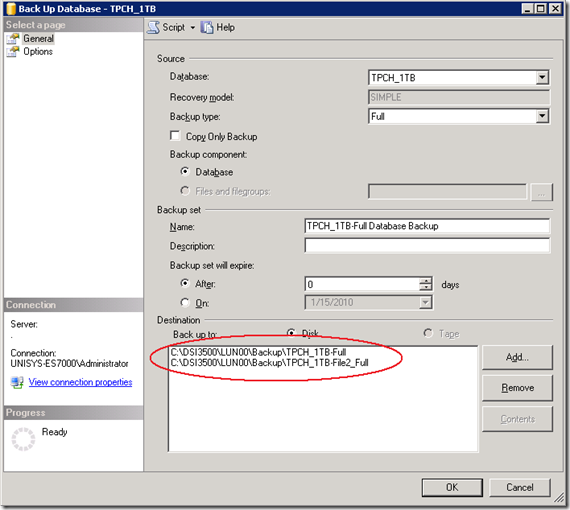

Step2: Increase throughput by adding more destination files

A feature that not many people know is the option to specify multiple file destinations to increase the throughput:

To add multiple Backup destination files in the query, add them like this:

DBCC TRACEON (3605, –1)

DBCC TRACEON (3213, –1)

BACKUP DATABASE [TPCH_1TB]

TO

DISK = N'C:\DSI3400\LUN00\backup\TPCH_1TB-Full',

DISK = N'C:\DSI3500\LUN00\backup\File2',

DISK = N'C:\DSI3500\LUN00\backup\File3',

DISK = N'C:\DSI3500\LUN00\backup\File4',

DISK = N'C:\DSI3500\LUN00\backup\File5',

DISK = N'C:\DSI3400\LUN00\backup\File6',

DISK = N'C:\DSI3500\LUN00\backup\File7',

DISK = N'C:\DSI3500\LUN00\backup\File8',

DISK = N'C:\DSI3500\LUN00\backup\File9'

WITH NOFORMAT, INIT,NAME = N'TPCH_1TB-Full Database Backup',

SKIP, NOREWIND, NOUNLOAD, COMPRESSION, STATS = 10

DBCC TRACEOFF(3605, –1)

DBCC TRACEOFF(3213, –1)

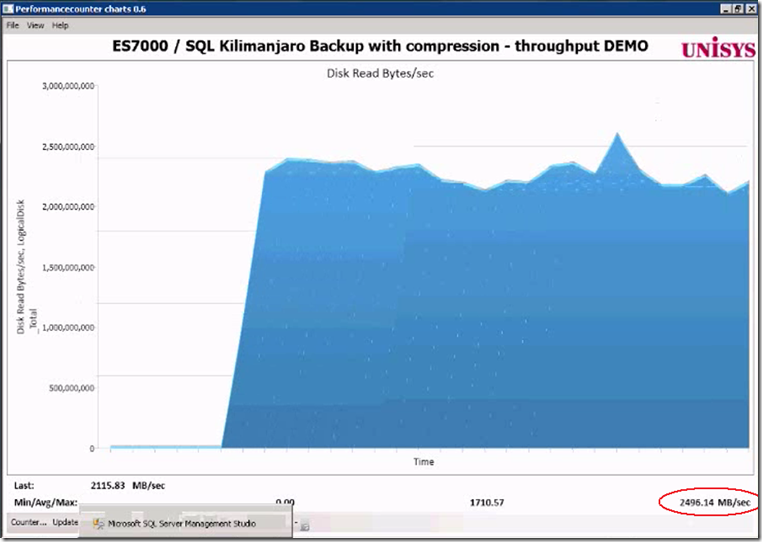

Result of adding 8 more destination files: the throughput increases from 1.4 GB/sec up to 2.2- 2.4 GB/sec.

That’s a quick win to remember!

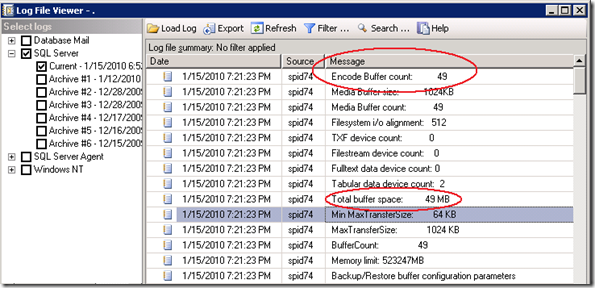

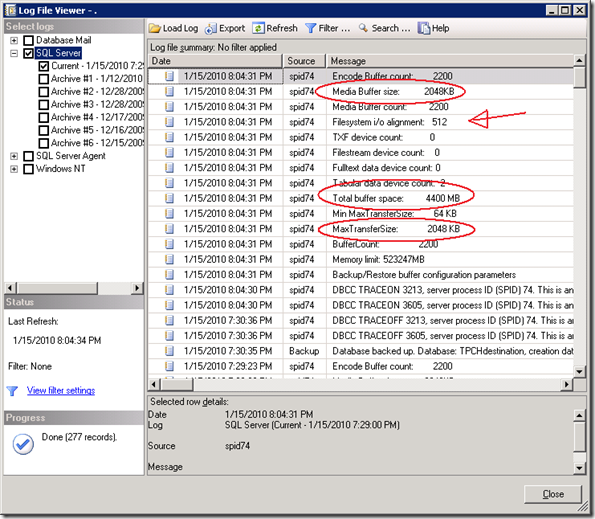

The SQL Errrorlog with the buffer configuration parameters shows that extra buffers are added automatically.

Step3 : Set Backup parameters options

The Backup/Restore buffer configuration parameters show some interesting parameters and values!

Time to start reading the ehh documentation! When you highlight the word ‘Backup’ and you hit <Shift-F1> you can read more on the topic; there are 2 Data Transfer Options listed :

BUFFERCOUNT and MAXTRANSFERSIZE.

BUFFERCOUNT : specifies the total number of I/O buffers to be used for the backup operation.

The total space that will be used by the buffers is determined by: buffercount * maxtransfersize.

The output shows this is correct; with 49 buffers * 1024 KB = 49 MB total buffer space is in use.

MAXTRANSFERSIZE : specifies the largest unit of transfer in bytes to be used between SQL Server and the backup media.

The possible values are multiples of 64 KB ranging up to 4194304 bytes (4 MB). The default is 1 MB.

a 3rd option is listed under the section Media Set Options:

Trial and Measure approach

DBCC TRACEON (3605, –1)

DBCC TRACEON (3213, –1)

BACKUP DATABASE [TPCH_1TB]TO

DISK = N'C:\DSI3400\LUN00\backup\TPCH_1TB-Full',

DISK = N'C:\DSI3500\LUN00\backup\File2',

DISK = N'C:\DSI3500\LUN00\backup\File3',

DISK = N'C:\DSI3500\LUN00\backup\File4',

DISK = N'C:\DSI3500\LUN00\backup\File5',

DISK = N'C:\DSI3400\LUN00\backup\File6',

DISK = N'C:\DSI3500\LUN00\backup\File7',

DISK = N'C:\DSI3500\LUN00\backup\File8',

DISK = N'C:\DSI3500\LUN00\backup\File9'

WITH NOFORMAT, INIT,NAME = N'TPCH_1TB-Full Database Backup',

SKIP, NOREWIND, NOUNLOAD, COMPRESSION,STATS = 10

— Magic:

,BUFFERCOUNT = 2200

,BLOCKSIZE = 65536

,MAXTRANSFERSIZE=2097152

GO

DBCC TRACEOFF(3605, –1)

DBCC TRACEOFF(3213, –1)

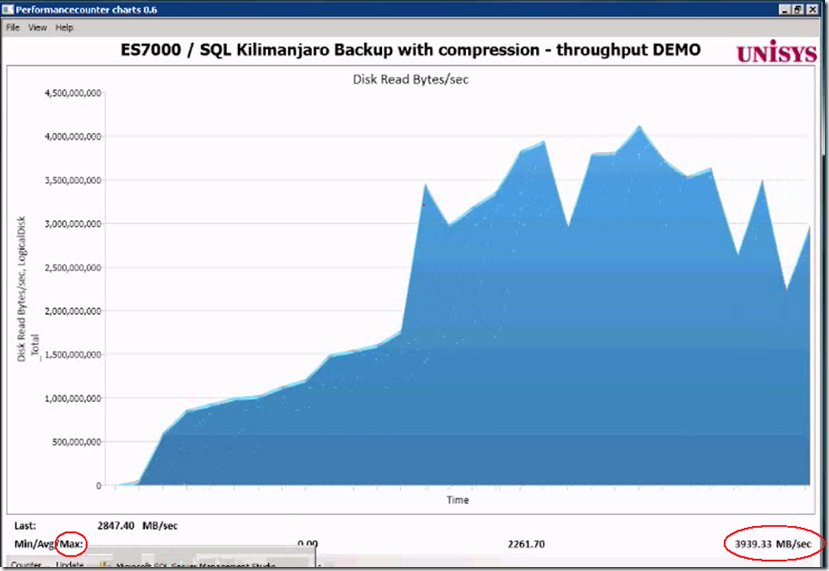

Result: the average throughput is more then doubled, and the maximum peak throughput is up to 3939 MB/sec !

BLOCKSIZE

An interesting observation is that I was more less expecting to see that by changing the BLOCKSIZE would also show up in the log, but it doesn’t .

Also the “Filesystem I/O alignment” value remained the same:

By coincidence, (I forgot to delete the backup files when adding some more destination files,) I found out that the blocksize value does make a change by looking at the error message:

Cannot use the backup file 'C:\DSI3500\LUN00\dummy-FULL_backup_TPCH_1TB_2' because

it was originally formatted with sector size 65536

and is now on a device with sector size 512.

Msg 3013, Level 16, State 1, Line 4

BACKUP DATABASE is terminating abnormally.

By specifying a large 64KB sector size instead of the default 512 bytes typically shows an 5-6% improvement in backup throughput.

BACKUP to DISK = ‘NUL’

To estimate and check how fast you can read the data from a database or Filegroup there is a special option you can use to backup to: DISK = ‘NUL’. You only need 1 of those !

FILEGROUP = 'SSD3500_0',

FILEGROUP = 'SSD3500_1'

TO DISK = 'NUL'

WITH COMPRESSION

, NOFORMAT, INIT, SKIP, NOREWIND, NOUNLOAD

, BUFFERCOUNT = 2200

, BLOCKSIZE = 65536

, MAXTRANSFERSIZE=2097152

Wrap-Up

Backup compression is a great feature to use. It will save you disk capacity and reduce the time needed to backup your data. SQL can leverage the IO bandwidth of Solid State storage well but to achieve maximum throughput you need to do some tuning. By adding multiple backup destination files and specifying the BUFFERCOUNT, BLOCKSIZE and MAXTRANSFERSIZE parameters you can typically double the backup throughput, which means reducing the backup time-taken by half!

11 Responses Leave a comment

Hi Henk,

It’s an amazing performance. good work. realy appreciated.

best regards

Loga

(Met you in Datacenter migration seminar on 02/02/10 at Microsoft London office)

Hi Henk,

Nice article!!!

Thanks,

Saravanan T

Thanks for a very well thought out and written article. Also, I wish I had your testing environment! ;)

Hi Henk

Thought I would leave a note this time, having used this post over and over so many times the past 18 months.

When I have discussions with customers on backup issues it is a great reference.

They often tell me that the SQL Server backup is slow and often list this as the reason why they use 3rd party tools. So far I have managed to turn over a good third of the customers I challenge, a pretty good success rate.

But the credit goes to you and this excellent blogpost.

I have seen some of your sessions on our own conference Miracle Open World and just wanted to give you credit for the excellent work you have done sofar.

Bye Bye Henk and take care

Best regards

Michael Torpegart

SQL Server Specialist

Miracle A/S

Denmark

Hi Michael, glad to hear!

typically a backup task would run in the background with minimial interference to the overall server load; this post is just to show how fast you can backup/restore if needed!

– Henk

Hi Henk,

I’m trying to reduce a time backup on the specific database (Size 1To with 20Go for Filestream). Actually, if I used the standard backup parameter means without to define BUFFERCOUNT, BLOCKSIZE and MAXTRANSFERSIZE (only one file destination), it tooks 3H18. When I used the standard backup parameter but by adding multiple backup destination files (4 files), it tooks 2H00 (I won 1H18). Ok, now I tried to run the backup by adding multiple backup destination files and specifying the BUFFERCOUNT, BLOCKSIZE and MAXTRANSFERSIZE (BUFFERCOUNT = 2200, BLOCKSIZE = 65536 , MAXTRANSFERSIZE=2097152). I got error (I/O request taking longer then 15 sec to complete on file, where file is file that I was to backup). Another thing, I have a hight consommation of CPU % (100% sometime). Here some information about the Virtual SQL server (VMWARE 5.1) : W2K8 R2, SQL 2008 R2 Enterprise Edition 64 SP2, 16 CPU, 64Go of RAM with 50Go reserved for SQL. The disk size is 4To (Raw Device connected via Controller PVSCSI). I aligned the disk too (Starting offset 65536, Byte by cluster 65536, byte by sector 512). Could you help me to understand hight CPU usage and error ?

Thanks,

Eric

Hi Eric,

Good to hear! 1TB/2 hours == 142 MByte/sec on average; this isn’t a huge number?

Do you know how many spindles are allocated to your SQL environment?

When IO’s take longer than 15 seconds to complete you probably consume all available throughput of the physical spindles and/or IO bandwith because of the additional writing into the database backup files? (or maybe check if an antivirus or backup driver is in the way?)

Have you tried lowering the IO pressure by allocating less buffers and less backup destination files?

if you would like to dig deeper to understand where you spend the cpu ticks, please check the SQL waitstats + spinlockstats DMV’s.

(Since the release of win2k8 the partition alignment is set correct automatically, as far as I’m aware )

-Henk

Hi Henk,

I know since the release of w2k8 R2, the partition alignement is set correct automatically but when I discussed with SAN support (like HITACHI) all the time they adviced to make the partition alignement.

Anyway, we used EMC CX4 SAN STORAGE. Basically, I have to ask more information about Lun (how many SPINDLE, which kind of disk architecture, brocade bandwith, …), is it correct ?

When I defined multiple backup destination files (4 files) but not specified the BUFFERCOUNT, BLOCKSIZE and MAXTRANSFERSIZE I had not CPU problem.

Thanks,

Eric

Hi Vijay, hope you found the answer already; but its like:

RESTORE DATABASE [TPCH_1TB]

FROM

DISK = N’C:\DSI3601\LUN01\backup\TPCH_1TB-Full’,

DISK = N’C:\DSI3601\LUN01\backup\File2′,

DISK = N’C:\DSI3601\LUN01\backup\File3′,

DISK = N’C:\DSI3601\LUN00\backup\File4′,

..

DISK = N’C:\DSI3601\LUN00\backup\Filex′

WITH FILE = 1, NOUNLOAD, REPLACE

, STATS = 10

GO

or search my blog for the keyword ‘Restore’ for more info! good luck

-Henk

Hi All,

Just thought I’d let you know that tuning the backups up to run this fast caused some issues for me. Using the buffercount of 2200 resulted in saturation of the data drive, which caused SQL to report 15 second waits on IO. I lowered buffercount to 500 and eliminated the errors, but still had a great improvement in backup time by a factor of 6.

Best wishes,

Sam

Thanks Sam! 6x faster is a great result if all data stored on the same data disk